Virtual Memory: A Deep Dive into Page Tables, TLBs, and Linux Internals

From page faults to NUMA topology: how the Linux kernel manages memory, and what that means for the performance of data-intensive systems.

A quick note before we begin: I’ve been quiet here for a while. Life happened, and I had to step away from publishing for longer than I expected.

This article is my way of getting back into rhythm. It is much larger than my usual pieces: roughly 25,000 words, compared to the 4,000–6,000 words I normally publish. I have been working on it for the last couple of months, and it is closer to a short book than a regular article.

Since this is a book-length deep dive, I have also prepared a beautifully typeset 60-page PDF version for readers who want to read it offline, highlight it, or keep it as a reference. Buying the PDF is also a direct way to support the work that went into this piece.

Thanks for sticking around. Now let’s get into virtual memory.

Virtual memory is one of those fundamental components of modern-day computing that is crucial to master for building and debugging high-performance data-intensive systems.

Normally, we think of virtual memory as a system that provides isolation at memory-level to processes, which means that the operating system (OS) can run mutiple processes concurrently without those processes interfering or corrupting each other’s data in memory. But, virtual memory does so much more than that, such as:

lazy allocation of memory through demand paging

copy-on-write for shared memory between processes, and fast process creation via fork

file I/O that avoids the page-cache-to-user-buffer copy using mmap

page reclaim, swap, and the page cache

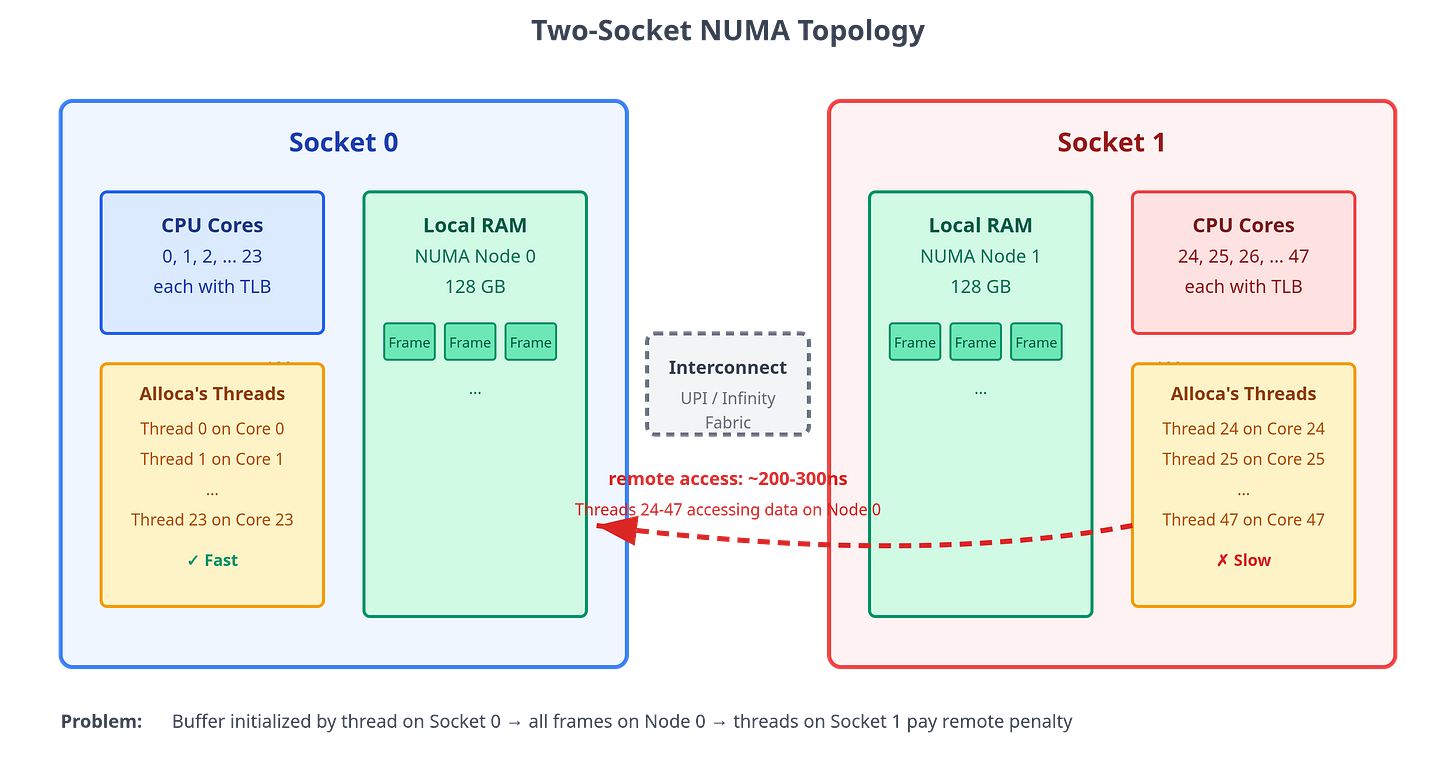

performance effects from access patterns, huge pages, TLB shootdowns, and NUMA placement.

This article is a broad, practical coverage of what virtual memory is, how it works, and how it affects performance of data-intensive systems. By the end of the article you will have a mental model and understanding of following key ideas:

Why virtual memory exists: Process isolation, memory protection, and the illusion of abundant memory.

The virtual address space: How a process’s memory is organized into segments (code, data, heap, stack, and memory-mapped regions).

Address translation: How virtual addresses are converted to physical addresses using hierarchical page tables, and why the page table hierarchy avoids wasting memory.

The role of hardware: How the MMU and TLB accelerate address translation, and why TLB hit rates matter for performance.

Demand paging: How the kernel delays physical memory allocation until pages are actually accessed, and how page faults drive this lazy allocation.

Memory types and reclaim: How anonymous, file-backed, shared, and tmpfs-backed pages differ, and why the kernel reclaims them differently.

Copy-on-write: How processes share memory efficiently and how fork creates new processes almost instantly.

Memory-mapped I/O: How

mmapmaps file data into a process address space, avoids an extra user-buffer copy, and enables shared memory between processes.Performance implications: How page size, TLB reach, and memory access patterns affect the performance of data-intensive workloads.

Observability: How to inspect VMAs, RSS/PSS, page faults, TLB behavior, and NUMA placement on Linux.

How to Read This Article

This article takes a different approach to teaching virtual memory. Instead of presenting a collection of facts and definitions, we explain concepts through a narrative: a series of dialogues between a newly created process named Alloca and the Kernel. Alloca encounters challenges as she executes her code, and the Kernel explains how things work in response to her questions. This dialogue-based format allows us to build understanding incrementally, introducing complexity gradually as natural questions arise.

Structure: Each section follows the same pattern: a dialogue that explores a concept in depth, followed by a Key Takeaway box that provides a formal summary, definitions, and technical details. If you prefer a quick overview, you can read just the Key Takeaway sections. If you want deep understanding, read the full dialogues.

Length and Pacing: This article is comprehensive, approximately 25,000 words covering everything from basic address translation to demand paging, page reclaim, copy-on-write, observability, and performance implications. Don’t feel obligated to read it in one sitting. Virtual memory is a complex topic with many interconnected pieces. Take your time, read it in multiple sessions, and let the concepts sink in. Each section builds on previous ones, so it’s designed to be read sequentially. Also, if you have taken a course in operating systems, the early parts of the article may seem a bit too basic to you. I encourage you to jump forward and directly read the parts that interest you, there is quite a lot of advanced content as well.

Implementation Details: Virtual memory concepts are largely universal across operating systems, but when we discuss specific implementation details, such as huge pages, TLB behavior, or page fault handling, those details are based on the Linux kernel and x86-64 architecture. Also, throughout the article we will talk about 4-level page tables that are still prevalently used in most kernels. Although, latest Linux kernel also supports 5-level page tables but it should be trivial to understand how that works if you master how 4-level page tables work.

Asides: While most of the article follows a narrative style of a dialogue between Alloca and the Kernel, there are certain additional details that I’ve sprinkled throughout the article in the form of asides.

Now, let’s meet Alloca and follow her journey through the virtual memory system.

The Need for Virtual Memory

As Alloca starts to execute her code, she encounters her first challenge. She needs to read some data from memory. The instruction contains the address of the data and Alloca thinks, “well, this shouldn’t be too difficult. I just need to go to this address and read the value”. But she is up for a huge surprise.

As she goes to that address, she finds that there is nothing there. It’s all just a facade. She stands there puzzled, wondering what she should do now. Then she sees a tall figure moving towards her from the shadows.

Alloca: “Who are you?”.

Kernel: “I’m the Kernel. I’m in charge of this entire world, I make sure that all processes do their job smoothly. What are you doing here? There is nothing at this place!”

Alloca: “I think I’m lost. I was supposed to read data from this address but it looks like it is all a facade, and I don’t know what to do now”.

Kernel (smiling): “I can understand the confusion. The address that you have is not a real address, it’s a virtual address.”

Alloca: “Virtual address? What does that mean?”

Kernel: “Well, what you think of memory is not the real physical memory, it is virtual memory. And, the address that you hold is a virtual address. What you need is the physical address to get the data from physical memory.”

Alloca: “What is virtual memory? Why not just give me direct access to physical memory?”

Kernel: “Let’s think about it from the first principles. I am responsible for the concurrent execution of not just you but hundreds of other process. You might not notice, but right now there are many other processes executing alongside you. If each one of you had direct access to physical memory, how would you coordinate who accesses which addresses in memory?”

Alloca: “That would be difficult because I don’t even know who else is executing, and I imagine processes come and go, so this would be impossible.”

Kernel: “Yes, that’s one problem. Even if you could talk to other processes, it would make the system extremely slow, because then on every memory access you would have to ask every process which addresses are available to use. And, it would also be a safety nightmare. A trivial bug in one process might corrupt another process’s data.”

Alloca: “I can see the problem. So how do you solve this?”

Kernel: “Through virtual memory! Basically, we have two problems to solve. First, Every process should be able to access memory without needing to worry if an address is in use by another process. Second, memory access should be safe without sacrificing performance.”

Alloca: “So, how does virtual memory solve these problems?”

Kernel: “Virtual memory is a software construct, it looks and feels like real memory, and it consists of addresses that you can read and write. I give every process its own private virtual memory space that it can freely navigate and manipulate without worrying about anyone else using that memory. This solves the first problem, it isolates memory for each process.”

Alloca: “But if these addresses aren’t real, then where do the reads and writes go? And, how is safety ensured?”

Kernel: “That part requires going into the weeds of how virtual memory works, but I will simplify for now. Because virtual memory is an abstraction, it can be controlled by me. So, I map the set of virtual addresses used by a process to a corresponding set of physical addresses. And, because I know which other processes are using which parts of physical memory, I can ensure that no two processes end up sharing the same physical addresses.”

Key Takeaway

The fundamental reason for virtual memory to exist is to provide memory-level isolation to processes. In a multitasking system where multiple processes can be running in parallel or in a time-shared manner, it is important that they don’t read or write each other’s data. By giving each process its own private virtual memory, the kernel ensures this never happens. Each process believes that it has full access to the entire physical memory, but in reality, it’s just virtual memory. Behind the scenes, the virtual memory is mapped to physical memory, and every process has a different mapping. Let’s learn how this mapping works in the next part.

A note on narrative accuracy:

In the scene above, Alloca consciously walks to an address and notices it’s a facade. That’s not literally how a process experiences memory. In reality, memory accesses are intercepted transparently by dedicated hardware (the MMU), and the Kernel, the process never notices any of this. But explaining that accurately requires understanding the MMU, page tables, and how the Kernel handles memory events, none of which we’ve covered yet. Starting there would be like defining a word by using the word itself. This is why we started with a simplified model. As we progress through the sections, we will gradually make our mental model more precise and accurate.

Size of Virtual Memory

Alloca now understands why virtual memory exists, but she still doesn’t understand how it works and what it looks like. Her questioning with the kernel continues.

Alloca: “If this memory that I see is virtual, does it mean that it is infinite?”

Kernel: “Not quite infinite, but very large. Tell me, what do you know about how addresses are represented in the CPU?”

Alloca: “Well, I know that on x86-64 systems, addresses are stored in 64-bit registers. So I suppose that means I can address 264 bytes?”

Kernel: “That’s what you’d expect, right? But there is a twist: while your addresses are indeed stored in 64-bit registers, not all those bits are actually used for addressing. Only 48 bits participate in the address translation.”

Alloca: “Why only 48 bits?”

Kernel: “It’s a pragmatic decision. Think about it: 48 bits gives you 248 bytes of addressable space, which is 256 TiB. That’s enormous! No application today needs anywhere close to that. The hardware designers decided that this was plenty for the foreseeable future, so they kept the address translation logic simpler by using 48 bits instead of the full 64. They left room to expand to 52 or 56 bits later if needed.”

Alloca: “So I have 256 TiB of virtual address space? That is huge! Can I use all of it?”

Kernel: “Ah, not quite. You can use only half of that, which is 128 TiB. I use the upper 128 TiB of that address space to map my own code and data into every process’s memory.”

Alloca: “You’re in my address space?”

Kernel: “I have to be! When you make a system call or when an interrupt happens, execution switches to kernel mode and starts running my code. If my code wasn’t already mapped in your address space, the CPU wouldn’t know where to jump to. So yes, I live in the upper half of every process’s address space. You can’t access my memory directly, but it’s there, ready for when execution needs to enter kernel mode.”

Alloca: “Okay, but how does such a huge virtual address space work because most machines have very small amount of memory installed, like 16 or 32 GB?”

Kernel: “That’s the beauty of virtual memory. Your virtual address space is completely independent of how much physical RAM is installed. Even if this machine has only 16 GB of RAM, your virtual address space still spans 256 TiB. The mapping from virtual to physical is where the two worlds connect, and that is managed by me. I take great care that these mappings remain within the limits of the installed physical memory.”

Key Takeaway

Because of the virtual nature of virtual memory address space, its size is much larger than the installed RAM. On the common 48-bit x86-64 virtual-address mode, the canonical virtual address range spans 256 TiB. Linux typically splits this into a lower user-space half and an upper kernel-space half. The lower 128 TiB is available to user processes, while the upper half is reserved for kernel mappings used when execution enters kernel mode. Physical address capacity is separate from virtual address capacity and depends on the CPU and platform.

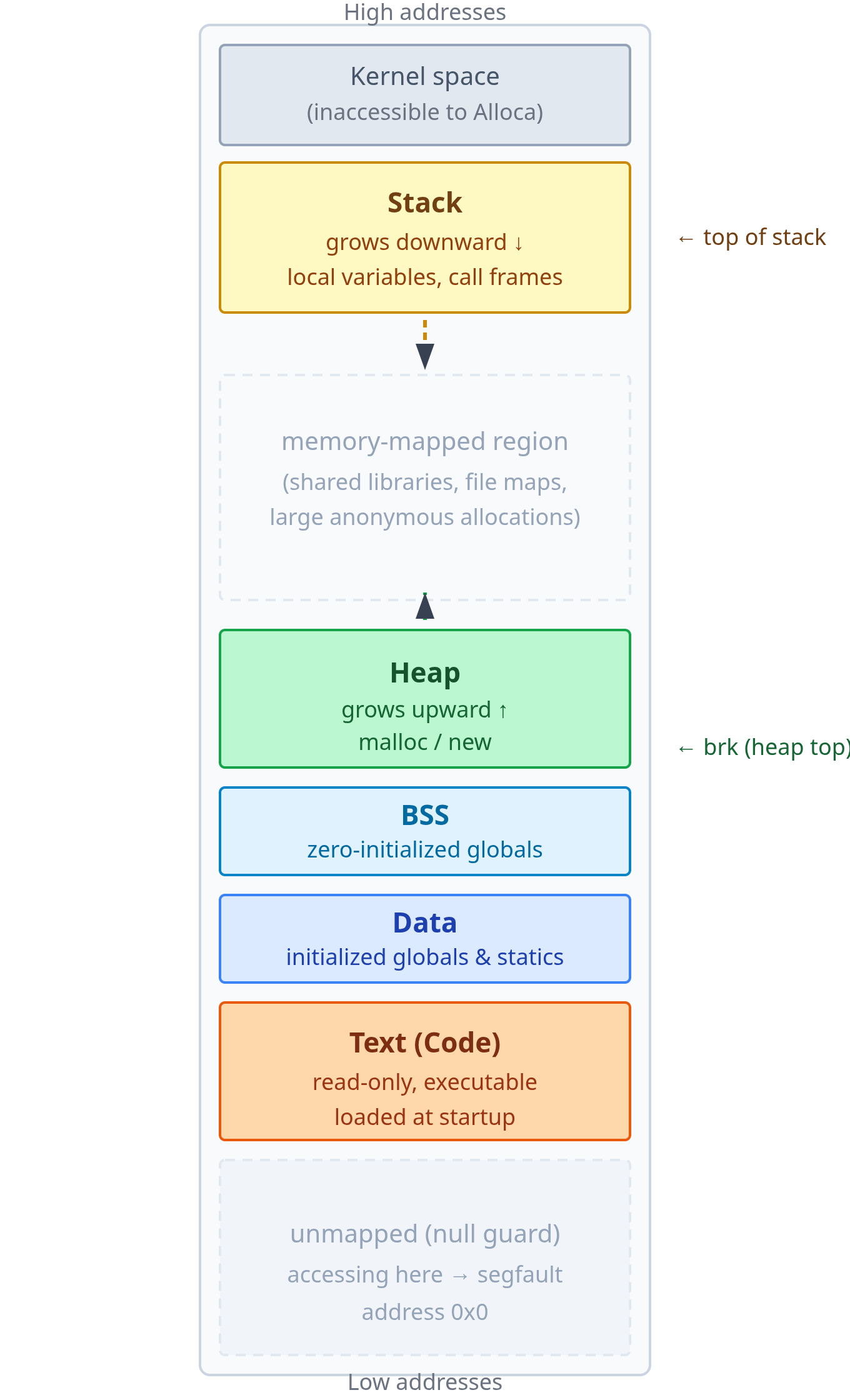

The Virtual Memory Address Space Layout

Alloca: “You mentioned that you map your code and data in the upper half of my address space. What is mapped in my half of the address space?”

Kernel: “Your half of the address space maps your code and your data.”

Alloca: “What does it look like? Is there a specific structure?”

Kernel: “Yes, there is a specific layout to your address space. It is organized in the form of segments, each designated to map certain kind of data. Let me show you how it looks.”

Kernel gestures, and Alloca can suddenly see a vertical map of her virtual memory

Kernel: “Down at the bottom, at low addresses, is your code. These are the instructions that you execute. This region is loaded when I created you. We call this the text segment.”

Alloca: “Makes sense. Above that I see there is data segment, I assume it maps all the other data?”

Kernel: “Not all the data, but a specific kind of data. Any global and static variables in your code that were initialized to non-zero values are loaded here. For example, if you created a constant

piwith value3.14, it will be in the data segment.”Alloca: “What about unintialized global data? Where does that go?”

Kernel: “The bss segment.”

Alloca: “Why a separate segment for that?”

Kernel: “Ah, it’s a clever trick for efficiency. Think about it: if you have a global variable that’s uninitialized, what value should it have when your program starts?”

Alloca: “Zero, I suppose.”

Kernel: “Exactly! Now imagine you have thousands of these zero-initialized globals. If we stored all those zeros in your compiled binary, the file would be bloated with zeros. That’s wasteful. So instead of doing that, the compiler and linker just make a note saying ‘hey, this program needs, say, 50 kilobytes of zero-initialized memory.’ They don’t actually put those zeros in the binary file. Then, when I load your program, I allocate that 50 KB, fill it with zeros, and map it into your address space as the BSS segment. Your binary stays small, loads faster, and you still get all your zero-initialized variables. Everyone wins.”

Alloca: “That’s clever! So the data and the bss segments are where all the static data goes. What about dynamic data? For example, when I add a new node to a linked list at runtime, does that memory get allocated in one of these segments?”

Kernel: “No, it can’t be. Think about it: can the data or BSS segments grow after your program starts?”

Alloca: “I guess not? You said their sizes are determined at compile and link time.”

Kernel: “Correct! They map your program’s static memory footprint based on everything the compiler knew from the code when it built your binary. But at runtime, you need to allocate memory dynamically. You might read a file and build a tree from its contents. The compiler had no way to know how much memory you’d need for that.”

Alloca: “So where does that memory come from?”

Kernel: “That’s what the heap is for. It sits right above BSS, and as you can see from the diagram, there’s a large stretch of empty address space above it.”

Alloca: “So the heap can grow into that empty space?”

Kernel: “Precisely! When you call malloc(), the allocator typically grows the heap upward by adjusting its upper boundary. We call that boundary the program break, or just

brkfor short. Each time you need more memory, the heap can expand upward into that unused region.”Alloca: “I see. But looking at the diagram, that empty region above the heap is enormous compared to everything else. The heap, stack, and all the segments look tiny by comparison. What is all that space?”

Kernel: “That space is basically the unmapped part of your address space.”

Alloca: “Unmapped? Why are there unmapped addresses?”

Kernel: “Glad that you asked, it’s really important to understand this part. Remember when we talked about the size of your virtual address space being 128 TiB?”

Alloca: “Yeah, you said that’s way bigger than the actual physical RAM in the machine.”

Kernel: “Yeah. A typical machine might have 16 or 32 GB of physical RAM. Even a beefy server with 256 GB of RAM is nowhere close to 128 TiB. So, it is not practically possible to map all of your virtual addresses to physical memory because there is simply not enough of it. And, even if there is a machine with 128 TiB of RAM installed, it doesn’t make sense to map all of it”

Alloca: “Why not?”

Kernel: “Because most programs probably use a few hundred megabytes at most, so the clever thing to do is to allocate and map only the required amount of memory to the process, leave the rest unmapped, and map it lazily based on demand.”

Alloca: “So what happens if I try to access one of those unmapped addresses?”

Kernel: “Well, if it’s an address I gave you, say from a successful

malloc()or mmap() call, then it’s yours to use. But if you just pick a random address in that unmapped region and try to read or write it, you’ll get a segmentation fault. The hardware will refuse the access because there’s no valid mapping.”Alloca: “Got it. So the unmapped region isn’t just empty space, it’s reserved space that can become mapped as needed?”

Kernel: “Exactly! And it gets mapped for several purposes. When you load a shared library, like

libc.so, I need to map its code and data somewhere in your address space. That middle region is where those libraries go. Same with file mappings: when you usemmap()to map a file into memory, it gets mapped here. Large allocations frommalloc()also often come from this region rather than growing the heap.”Alloca: “So it’s a flexible region for all kinds of dynamic mappings?”

Kernel: “Precisely! It’s the largest part of your address space, and it’s there to accommodate whatever dynamic memory needs arise during your execution.”

Alloca: “That leaves the stack at the top. What is that?”

Kernel: “It is a dedicated region for managing function calls. Every time you call a function, the stack is involved.”

Alloca: “Why does calling a function need its own memory region? Why not use one of the other segments?”

Kernel: “Let’s think about what needs to happen when you call a function. What kind of data does a function need?”

Alloca: “Well, its local variables, I suppose. And probably the return address so it knows where to jump back to when it’s done?”

Kernel: “Exactly! And also the CPU register values that need to be saved and later restored when the function returns. Now, all of this needs to be allocated when a function is called and cleaned up automatically when it returns. Which of the segments we’ve discussed could handle something like this?”

Alloca: “Not the data or BSS segments, those are fixed in size. They can’t grow and shrink.”

Kernel: “What about the heap?”

Alloca: “The heap can grow, but I’d have to explicitly

mallocandfree, right? That would be tedious, slow, and error-prone for every function call.”Kernel: “Yeah, what you need is a region that grows and shrinks automatically as functions are called and return. It needs to follow a very specific pattern: the last function you called is the first one that returns. Does that sound familiar?”

Alloca: “That’s… last-in-first-out. Like a stack data structure!”

Kernel: “Precisely! That’s why we call it the stack. The processor even has dedicated instructions,

pushandpop, that work with a special register called the stack pointer. This register tracks the current top of the stack. When you call a function, all its data (local variables, saved registers, return address) ends up on the stack. When you return, that block gets popped off. All automatic, no manual memory management needed.”Alloca: “So it’s about automatic lifetime management for function-local data. But what happens if there is a very deep chain of function calls? Can the stack grow indefinitely?

Kernel: “Not quite. As one function calls another, space needs to be made on the stack to accommodate the local variables of the called function. But there is a limit to how much the stack can grow. For example, on x86-64, the default configured maximum size of the stack is 8 MB.”

Alloca: “But as I can see, the stack is right at the top of the address space, where does it have room to grow?”

Kernel: “Good observation! The stack is usually mapped at the higher address range and it grows by moving towards the lower address ranges. So, for example, if the stack pointer is currently 0x120008 and you push an 8 byte value on the stack, the stack pointer becomes 0x120000”

Alloca: “So the heap grows upward and the stack grows downward?”

Kernel: “Yes. The empty space between them is the buffer that lets both grow without colliding. In practice, a process runs out of one or the other long before they meet.”

Alloca: “Okay, I understand the layout now. But I’ve one final question about it, what is the need for such a layout? Why not simply store data anywhere you find space?”

Kernel: “Great question! There are two big reasons: performance and security. Which one would you like to hear about first?”

Alloca: “Let’s start with performance.”

Kernel: “Alright. Tell me, if you are reading a value from an array at index 5, what do you do after that?”

Alloca: “Well, I probably would read index 6, then 7, and so on? Most array processing is sequential like that.”

Kernel: “Exactly! And when you’re executing instructions in your code, you typically run them one after another, right? You’re not randomly jumping all over the place.”

Alloca: “Right, except for loops and function calls, it’s mostly sequential.”

Kernel: “Yes! This pattern of accessing nearby memory locations is so common that the hardware is designed around it. But, fetching data from physical memory is slow. Really slow. It can take hundreds of CPU cycles.”

Alloca: “That sounds terrible!”

Kernel: “It would be, if the CPU actually went to main memory for every single read. But it doesn’t. The CPU has a fast cache, smaller but much faster storage right on the chip. And this is the clever bit: when you read a value from memory, the hardware doesn’t just fetch that one value. It fetches an entire block around it, typically 64 bytes, called a cache line.”

Alloca: “So it’s betting that I’ll need the nearby data too?”

Kernel: “Precisely! And because of how you traverse arrays or execute sequential instructions, that bet pays off most of the time. The next value you need is already sitting in the cache, ready instantly. This is called spatial locality.”

Alloca: “Ah, so that’s why the organized layout helps! If my heap has all my data structures, and I’m traversing a linked list, the nodes are likely to be near each other in memory?”

Kernel: “Well, linked lists are actually a bad example, their nodes can be scattered all over the heap. But arrays, yes! And more importantly, think about your stack. When you’re executing a function, you’re constantly accessing its local variables. Because they’re all packed together in one stack frame, most of those accesses hit the cache.”

Alloca: “And the same applies to code in the text segment?”

Kernel: “Exactly. Your instructions execute sequentially, so the processor can even prefetch the next cache line before you ask for it. By keeping code separate from data, and keeping different types of data in their own regions, we maximize these cache-friendly access patterns.”

Alloca: “That makes sense! What about security? How does the layout help there?”

Kernel: “Let me ask you this: if an attacker managed to write arbitrary bytes into your heap, say through a buffer overflow bug, what’s the worst thing they could do?”

Alloca: “Um, corrupt my data structures? Make my program crash?”

Kernel: “That’s bad, but there’s something worse. What if those bytes they wrote were actually machine instructions? And what if they then tricked your program into jumping to that address?”

Alloca: “Oh no… then the CPU would execute their malicious code as if it were part of my program!”

Kernel: “Exactly. And without protection, they could also try to overwrite your actual code in the text segment, inserting a backdoor directly into your program.”

Alloca: “So how do we prevent that?”

Kernel: “By giving each segment permission bits. Think about what should be allowed for each segment. Should you be able to write to your code segment?”

Alloca: “No, the code is fixed! It shouldn’t change while the program runs.”

Kernel: “Right. So the text segment is marked read-only and executable: you can run code from it, but you cannot write to it. Now, what about your heap and stack?”

Alloca: “I need to read and write data there all the time. But I should never execute code from there, right?”

Kernel: “Perfect! The heap and stack are marked read-write but not executable. You can modify your data, but if someone tries to jump to an address in the heap and execute it, the processor will refuse and kill your process.”

Alloca: “So by separating code from data, we can enforce different permissions on each?”

Kernel: “Precisely. This is often called W^X protection (write XOR execute). Memory can be writable or executable, but not both. By organizing memory into distinct segments, we make this protection model clean and enforceable.”

Key Takeaway

The virtual address space is organized into several distinct segments:

Text (code) segment: The compiled instructions of the program. Loaded at startup, mapped read-only and executable. The process cannot write to its own code pages.

Data segment: Global and static variables that have been explicitly initialized. Size is fixed at link time.

BSS segment: Global and static variables that are zero-initialized. The binary stores no data for this region; the loader provides zero-initialized memory for it at startup.

Heap: The region for dynamic memory allocation (

malloc/new). Starts just above the data/BSS segments and grows upward for small allocations; its upper boundary is called the program break (brk). Many allocators also usemmapdirectly for large allocations rather than growing the heap viabrk.Memory-mapped region: A large, flexible area in the middle of the address space used for shared libraries, file mappings, and anonymous large allocations. Libraries like

libcare loaded here.Stack: Holds the call frames of all currently executing functions. Starts near the top of the address space and grows downward. Each function call pushes a frame containing local variables, saved registers, and the return address; each return pops it.

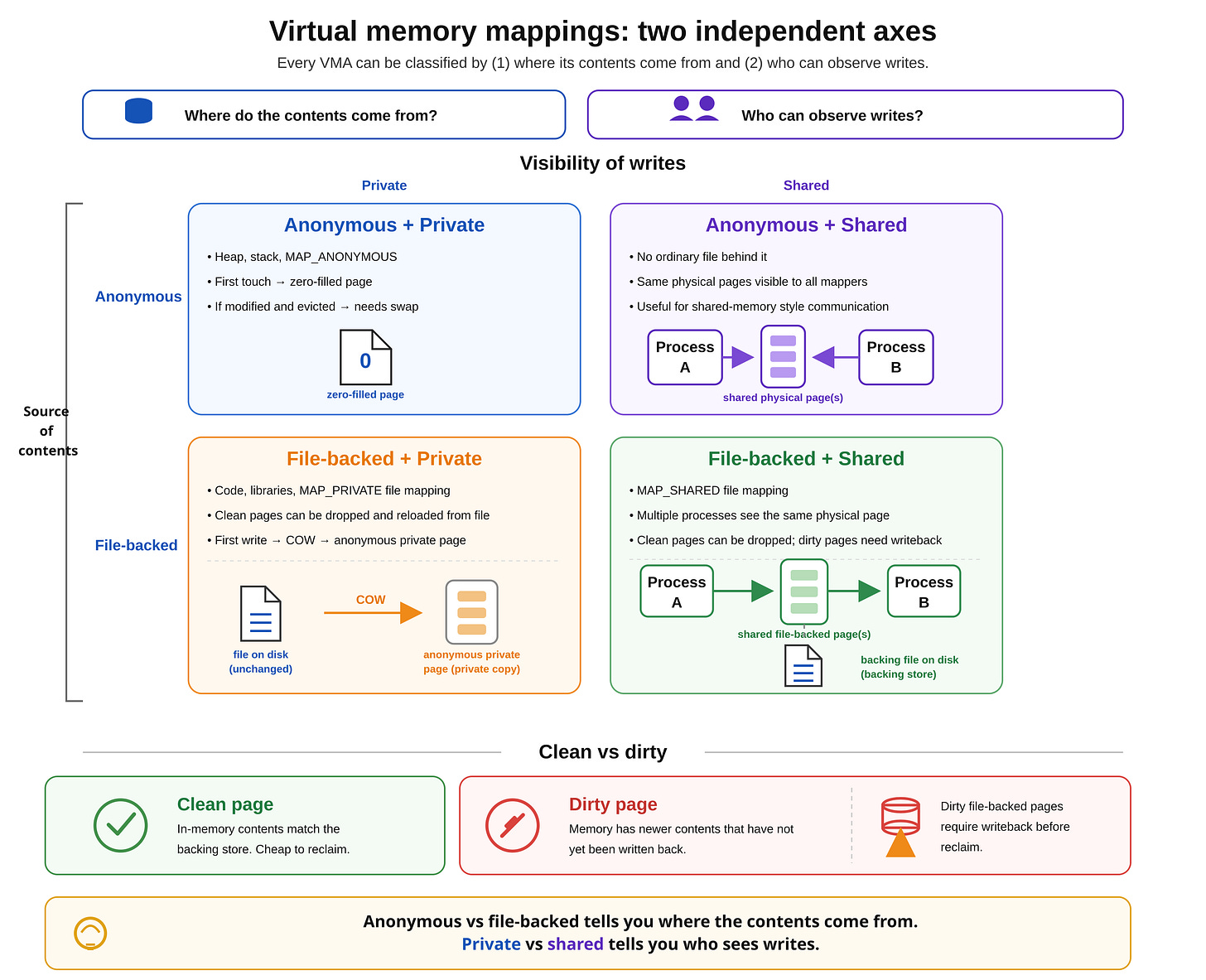

Aside: Anonymous memory

Throughout the article, we will come across a term “anonymous memory”, it is important that we understand what it means.

The kernel manages two kinds of memories:

Anonymous memory: this is allocated using

mallocormmapwith theMAP_ANONYMOUSflag. This is also the memory backing a process’s heap, stack and similar segments.File-backed memory: this is the memory which is backed by a file. You normally create it using

mmapand passing a file descriptor to it.

We will cover both of these in quite detail as we progress through the article, but having this common vocabulary will help us move faster.

How are Virtual Addresses Translated to Physical Addresses

Alloca: “I understand the layout. Code down here, stack up there. But these are all virtual addresses. How does a virtual address ever become real? I’m imagining you keep a table, virtual byte 0 maps to physical byte X, virtual byte 1 maps to physical byte Y, one entry for every address. Is that how it works?”

Kernel: “That’s the natural first thought. Let’s see what it costs. Your address space (the user-space half) is 128 TiB, that’s roughly 140 trillion bytes. At 8 bytes per table entry, a per-byte mapping table would take 1 PiB of storage per process. That’s impractical.”

Alloca: “So a per-byte table is out. But you do need a lookup of some kind.”

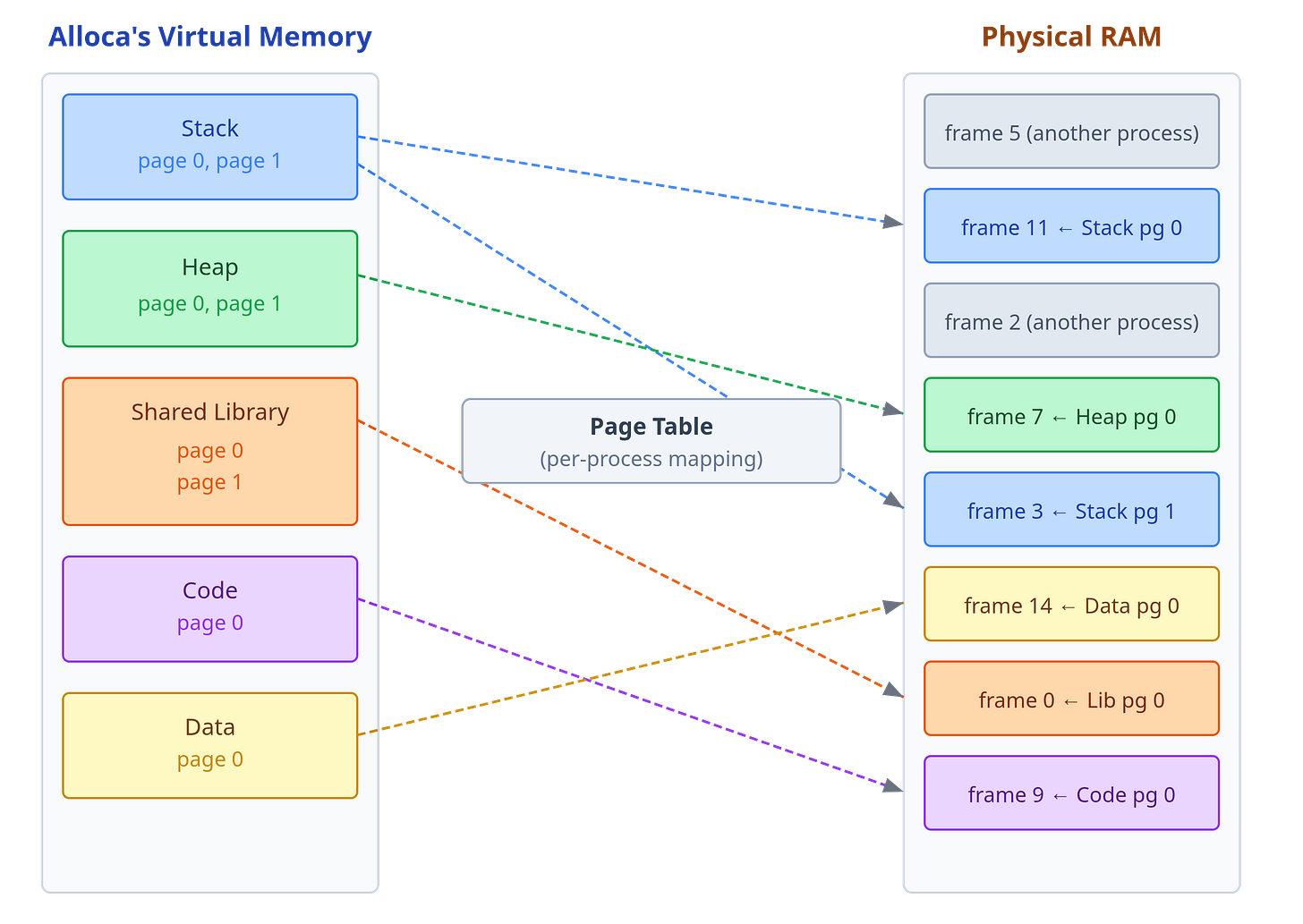

Kernel: “Yes, we do. But, instead of mapping individual bytes, we map fixed-size chunks. I divide your virtual address space into fixed-size chunks called pages, and I divide physical memory into same-sized chunks called frames. Each virtual page maps to one physical frame at a time. One table entry per page, not per byte. This way we don’t waste too much space maintaining the mapping itself.”

Alloca: “How large are these chunks?”

Kernel: “4 kilobytes. At that size, your 128 TiB address space divides into 235 pages.”

Alloca: “Wait, why 4 kilobytes specifically? Why not map smaller chunks like 1 kilobyte, or larger ones like 64 kilobytes?”

Kernel: “Good question! Let me ask you this: when you read a variable from memory, say an integer, do you usually read just that one value and nothing else nearby?”

Alloca: “Well, no. If I’m reading

array[5], I probably readarray[6]andarray[7]soon after. And when executing code, I run instructions sequentially, one after another.”Kernel: “Exactly! Memory accesses happen in clusters, spatial locality again. The hardware already exploits this with 64-byte cache lines; pages work the same way at a coarser scale. 4 KB is a sweet spot: large enough that related data usually falls within the same page, but also small enough that we don’t waste physical memory when only part of a page is touched.”

Alloca: “So 4 KB is a sweet spot between granularity and efficiency?”

Kernel: “Right. And because every page and every frame is exactly the same size, any free frame can back any page. It doesn’t matter where in physical memory that frame happens to sit.”

Alloca: “Okay, I understand the page size. But there is something that I still don’t get: you’re mapping an entire 4 KB page to an entire 4 KB frame. But, I have a specific address, and I want to read 8 bytes from it. How do you find out which virtual page that address belongs to, to get the corresponding physical frame?”

Kernel: “The answer lies in the virtual address itself. Think of it like a library call number. When a librarian gives you the number 3-07-42, you know immediately that the book is on floor 3, rack 07, shelf 42. The number encodes two things at once: which shelf unit to find, and where within that unit to look. A virtual address works the same way. It encodes which page the address falls in, and the byte position within that page.”

Alloca: “So the address itself tells you both the page and the position inside it?”

Kernel: “Yes. Every virtual address is implicitly two things: the virtual page number, given by the upper bits, and the page offset given by the lower 12 bits. 12 bits because 2¹² = 4096, one for every byte in a page. Say your address points 500 bytes into page N. When I map page N to physical frame M, your data is still 500 bytes in, because the frame is the same 4 KB size. The offset does not change during translation. So I look up the virtual page number in your page table, get back the physical frame number, attach the same offset, and that gives the physical address of exactly the 8 bytes you asked for.”

Alloca: “Okay, I understand that part. But something is still not clear. You said that my address space is 128 TiB. If there’s one page table entry per 4 KB page, that’s 235 entries. At 8 bytes each entry, that’s 256 GiB of page table. Per process. That’s not workable.”

Kernel: “Exactly, that’s the problem with a flat table. So let me ask you this: what if, instead of tracking every single page, we tracked which regions of your address space are in use?”

Alloca: “Regions? Like groups of pages?”

Kernel: “Yes. Think about your address space. You have code at the bottom, a heap above that, maybe some libraries in the middle, and a stack at the top. Most of the space between them is empty, right?”

Alloca: “Right, huge stretches of unused addresses.”

Kernel: “So what if I had a high-level index that just tracks which large regions are in use, and then within each of those regions, I have another index for smaller regions, and so on, until I get down to individual pages?”

Alloca: “Like… a tree structure? Where each level zooms in on a smaller portion?”

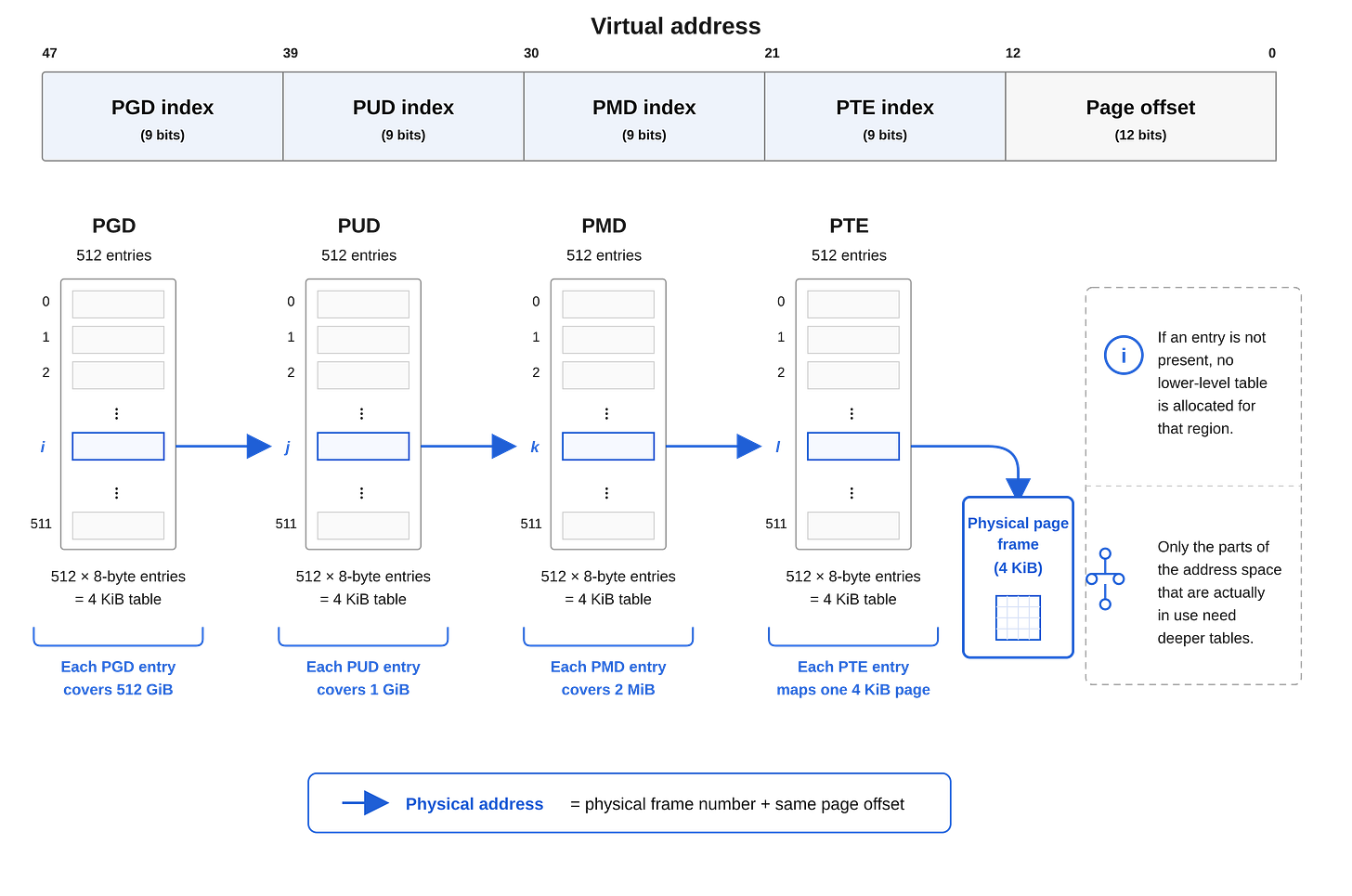

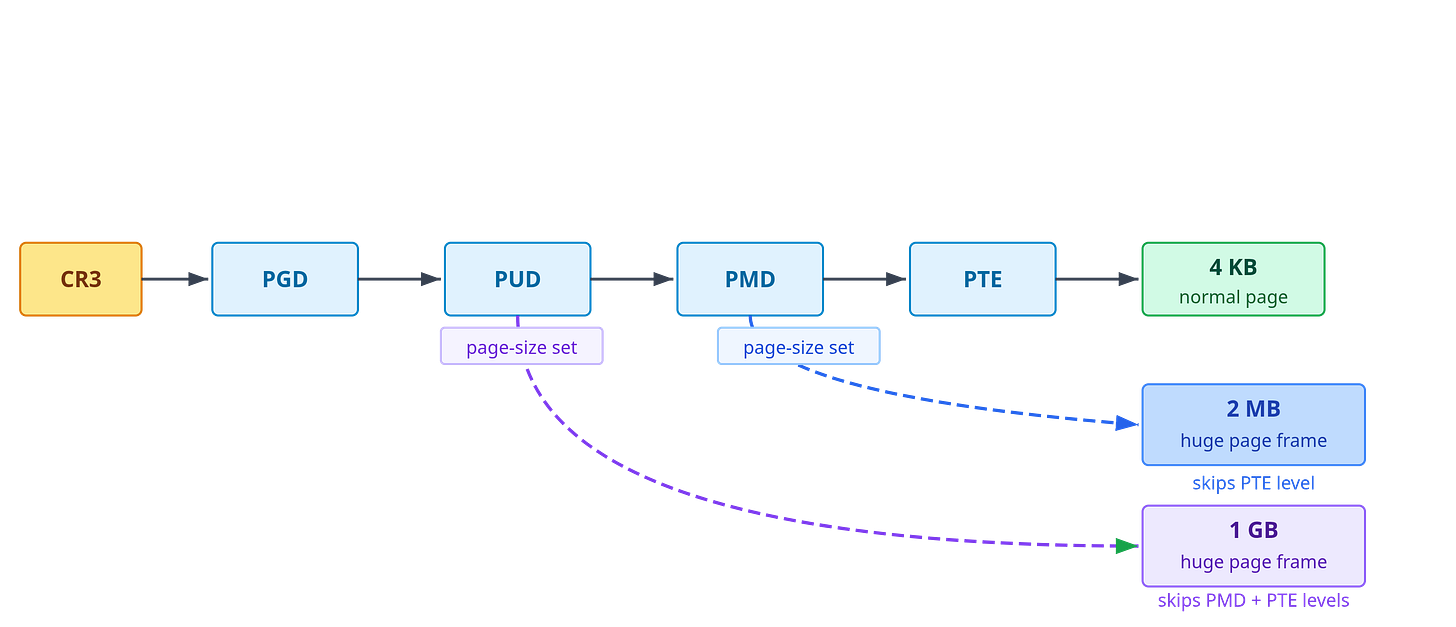

Kernel: “Precisely! It’s called a hierarchical page table. There are four levels. At the top level, there’s a table with 512 entries, and each entry represents 512 GB of your address space. If an entire 512 GB region is unused, that entry is just marked absent, no further tables are allocated for it.”

Alloca: “So you only allocate the deeper levels of the tree for the parts I’m actually using?”

Kernel: “Yeah. Each entry at the top level can point to a second-level table, which again has 512 entries, each covering 1 GB. Each of those can point to a third-level table covering smaller regions, and so on, until the deepest level maps to individual 4 KB pages.”

Alloca: “But wait, doesn’t having four levels still waste space? If I use just one page, don’t you still need entries at every level to reach it?”

Kernel: “Yes, but consider the scale. For that one used page, I need one entry in the top-level table, one second-level table with 512 entries, one third-level table with 512 entries, and one fourth-level table with 512 entries. That’s roughly 12 KB total. Compare that to a flat table: 235 entries times 8 bytes equals 256 GiB. I save a factor of 20 million.”

Alloca: “So the table itself only exists for the parts of my address space I’ve actually used.”

Kernel: “Correct!”

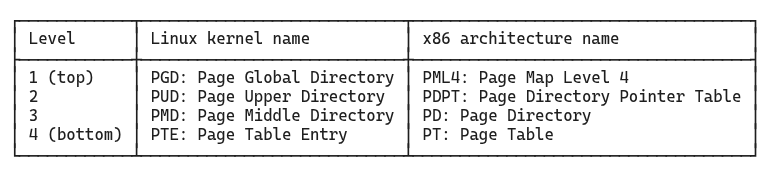

Aside: Page table level names difference between Linux and x86

The four levels of the page table hierarchy have different names depending on whether you’re reading Linux kernel source or Intel/AMD architecture manuals.

The x86 names are tied to the specific architecture. The Linux names are more generic and are used consistently across architectures that Linux supports, whether that’s x86-64, ARM64, or RISC-V, even when the underlying hardware has a different number of levels. Throughout this article we use the Linux kernel names: PGD, PUD, PMD, and PTE.

Alloca: “But how does a virtual address help you traverse this?”

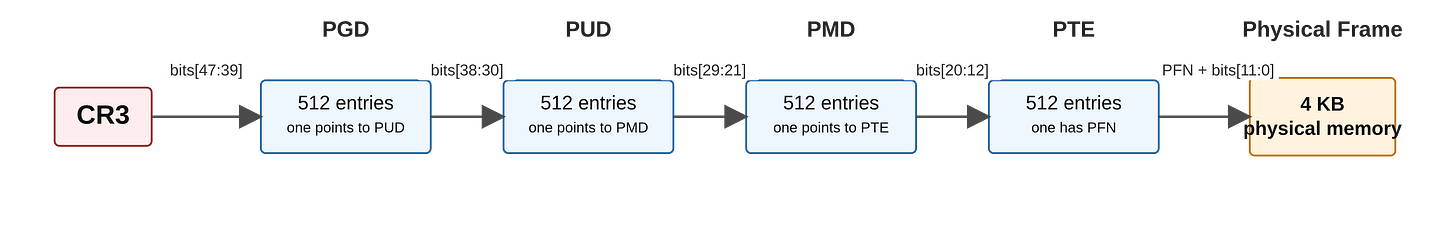

Kernel: “It’s actually pretty clever. Your virtual addresses are 64 bits wide, but only 48 bits are used. Those 48 bits are split into five parts: four groups of 9 bits each, followed by a 12-bit offset. The first four groups are used one by one to step through each level of the page table tree, narrowing down to the right physical frame. The offset is then used to pinpoint the exact byte within that frame.”

Alloca: “What is the exact split of these bits?”

Kernel: “The first group (bits 47 down to 39) gives a number between 0 and 511, which I use as an index into the PGD. That entry points me to a PUD. I take the next group (bits 38 down to 30) and index into that PUD, which points to a PMD. I repeat this for the PMD and PTE levels.”

Alloca: “That leaves the bottom 12 bits, those act as offset within the page frame?”

Kernel: “Yes, once you reach the PTE and get the physical frame number, you combine it with those 12 bits to get the exact byte you want. 12 bits because 212 is 4096, the page size.”

Aside: 48-bit virtual addresses

On common 4-level x86-64 systems, virtual addresses are stored in 64-bit registers, but you should have noticed that only 48 bits participate in this address translation scheme, what about the top 16 bits?

The top 16 bits must be a sign-extension of bit 47: all zeroes for low-half user-space addresses, all ones for high-half kernel-space addresses. Such addresses are called canonical addresses. A non-canonical address faults before the normal page-table walk even completes. This is what creates the large unused gap between the low and high halves of the 64-bit virtual address space.

Recent x86-64 processors and Linux kernels also support 5-level page tables, which use 57 bits for address translation (adding a fifth level called P4D (Page 4th Directory) between the PGD and PUD). This provides 2⁵⁷ bytes (128 PiB) of virtual address space per process. The additional level uses bits [56:48] as an index, with bits [63:57] remaining as sign-extension of bit 56.

Alloca: “I see how the bits map to the levels. But who actually performs this translation? On every memory access, something has to look up these tables.”

Kernel: “A dedicated piece of hardware called the Memory Management Unit, or MMU. It intercepts every address you issue. You never see any of this; to you it appears as if you are reading directly from your virtual address.”

Alloca: “So the MMU does this lookup automatically on every memory access? How does it know where to start?”

Kernel: “The CPU has a register called

CR3that holds the physical address of your current PGD, the top-level table. I update it on every context switch so the MMU knows which process’s tables to use.”Alloca: “And then it uses the bits from my address to walk through the levels?”

Kernel: “Yeah, the same bit fields we just covered. Bits [47:39] index into the PGD, [38:30] into the PUD, [29:21] into the PMD, and [20:12] into the PTE. That last entry gives the physical frame number, which the MMU combines with the 12-bit page offset to produce the physical address.”

Alloca: “But this means every memory access now requires four table lookups. That’s four extra memory reads just to translate my address. Doesn’t that make every memory access slower than it should be?”

Kernel: “It would be, if we had to walk all four levels every time. But the MMU has a small, dedicated hardware cache called the Translation Lookaside Buffer, or TLB. Every time a page table walk completes successfully, the result is stored in the TLB: ‘virtual page P maps to physical frame F.’ The next time you access the same page, the MMU checks the TLB first. If it’s there (a TLB hit), the translation completes in a handful of cycles, with no table walking at all.”

Alloca: “And how often does that happen?”

Kernel: “Programs that reuse the same memory regions repeatedly, such as tight loops, frequently executed functions, reused buffers, tend to stay within a small working set of pages, keeping the TLB warm and page walks rare. But that is not a given. Access patterns matter a great deal.”

Aside: Working set

A process’s working set is the subset of its virtual pages that are actively needed during a given window of execution. It’s not a fixed quantity, it shifts as the program moves through different phases. A tight loop over a small array has a tiny working set: just the pages holding the loop instructions and the array. A database engine scanning a large table has a much larger one.

The working set matters for two hardware structures:

TLB: If the working set fits within the TLB’s capacity (typically a few hundred to a few thousand entries), translations stay cached and page walks are rare. If the working set exceeds TLB capacity, there are larger number of TLB misses which may cost performance.

Physical RAM: If the working set fits in RAM, pages stay resident. If it doesn’t, the kernel must evict pages to swap and reload them on demand, which is a far more expensive operation (we cover eviction and swap later in the article).

Keeping the working set small and stable is one of the most effective things a program can do to improve memory performance.

Key Takeaway

Virtual memory operates at the granularity of pages (4 KB chunks of virtual address space) that map to frames (4 KB chunks of physical memory). Each virtual address encodes two pieces of information: the virtual page number (upper bits) and the page offset (lower 12 bits). The offset stays the same during translation; only the page number changes to a frame number.

On x86-64, the kernel uses a four-level hierarchical page table to perform this mapping. The structure has four levels named PGD (Page Global Directory), PUD (Page Upper Directory), PMD (Page Middle Directory), and PTE (Page Table Entry). A 48-bit virtual address is divided into four 9-bit index fields (one per level) plus a 12-bit offset, as shown in Figure 2. The hierarchy is sparse: only the portions of the address space actually in use require allocated page table structures, avoiding the 256 GiB overhead of a flat table.

Because each virtual page is mapped independently, there is no requirement that consecutive virtual pages land in consecutive physical frames. A process’s pages can be scattered anywhere in physical RAM, interleaved with frames from other processes, yet the process always sees a clean, contiguous address space. Figure 4 shows this concretely.

The Memory Management Unit (MMU) performs address translation in hardware. On x86, the register CR3 holds the physical address of the current process’s PGD. On every memory access, the MMU first checks the translation lookaside buffer (TLB) to see if the translation is already cached. If not, the MMU performs a full page table walk to do the translation and then caches the translation in the TLB.

Memory Protection via Permission Bits

Alloca: “Earlier you told me that my code segment is read-only. I can execute it but not write to it. But now that I understand the page table, I don’t see what actually enforces that. My code pages have entries in the page table just like everything else. What stops me from writing to them?”

Kernel: “Each page table entry carries more than just the frame number. It also holds permission bits. The writable bit says whether you can write to that page, if it is 0, the MMU refuses the write and faults: it stops the access mid-flight and signals me to handle the situation. The executable bit says whether you can run code from it. When I set up your code segment I mark those pages as executable but not writable. Your data and heap are writable but not executable. The MMU checks these bits on every access.”

Alloca: “What happens when it faults? Say I try to write to one of my code pages?”

Kernel: “I get called to handle it. A permission violation is almost always a bug or a security attack, so I typically terminate you.”

Alloca: “Got it. Are there other kinds of bits apart from permission bits?”

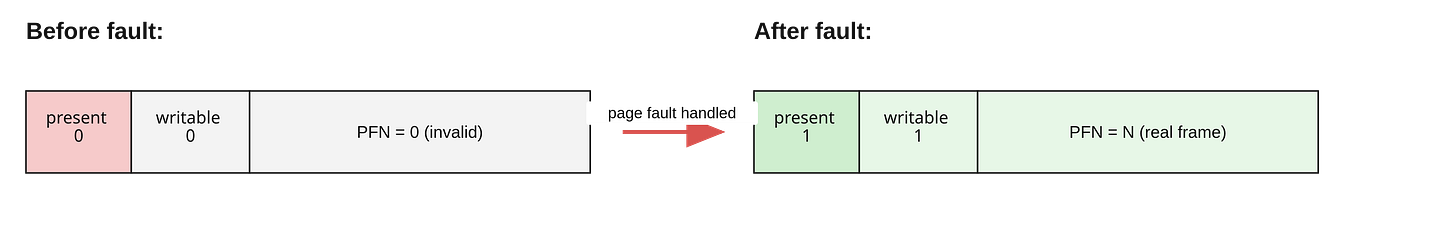

Kernel: “Yes, a very important one that you should know about. There is a present bit in every entry, at every level of the hierarchy. If it is 0, the walk stops there and the CPU faults. But a not-present entry doesn’t necessarily mean something went wrong. It might just mean that I haven’t allocated a physical frame for that page yet, or that the page has been evicted to disk.”

Alloca: “So the permission bits enforce boundaries between code and data, and the present bit tells you whether a page is backed by physical memory at all.”

Kernel: “Exactly!”

Key Takeaway

Each page table entry contains not just a frame number but also several permission bits that the MMU enforces on every memory access:

Present bit: Indicates whether the page is currently backed by a physical frame. If 0, the page table walk stops and the CPU raises a page fault. A not-present page doesn’t always signal an error; it might mean the kernel has promised the address range but hasn’t yet allocated physical memory for it (demand paging, covered in the next section). It might also mean that the physical frame was swapped to disk and reused by another process.

Writable bit: Controls write permission. If 0, any write attempt triggers a fault. Used to make code pages read-only and to implement copy-on-write (covered later).

Executable bit (or NX/XD bit): Controls execution permission. If the page is marked non-executable, the processor refuses to fetch instructions from it. Code pages are marked executable; data, heap, and stack pages are marked non-executable to prevent code injection attacks.

The MMU checks these permission bits on every memory access, before the access completes. Permission violations typically indicate bugs or security violations and usually result in the kernel terminating the faulting process. This hardware-enforced separation between code and data is a foundational defense against many classes of exploits.

Demand Paging

Some time passes. Alloca has been running her code and has grown more comfortable in this world. But now she needs more memory, she is about to process a large dataset and needs space to store intermediate results.

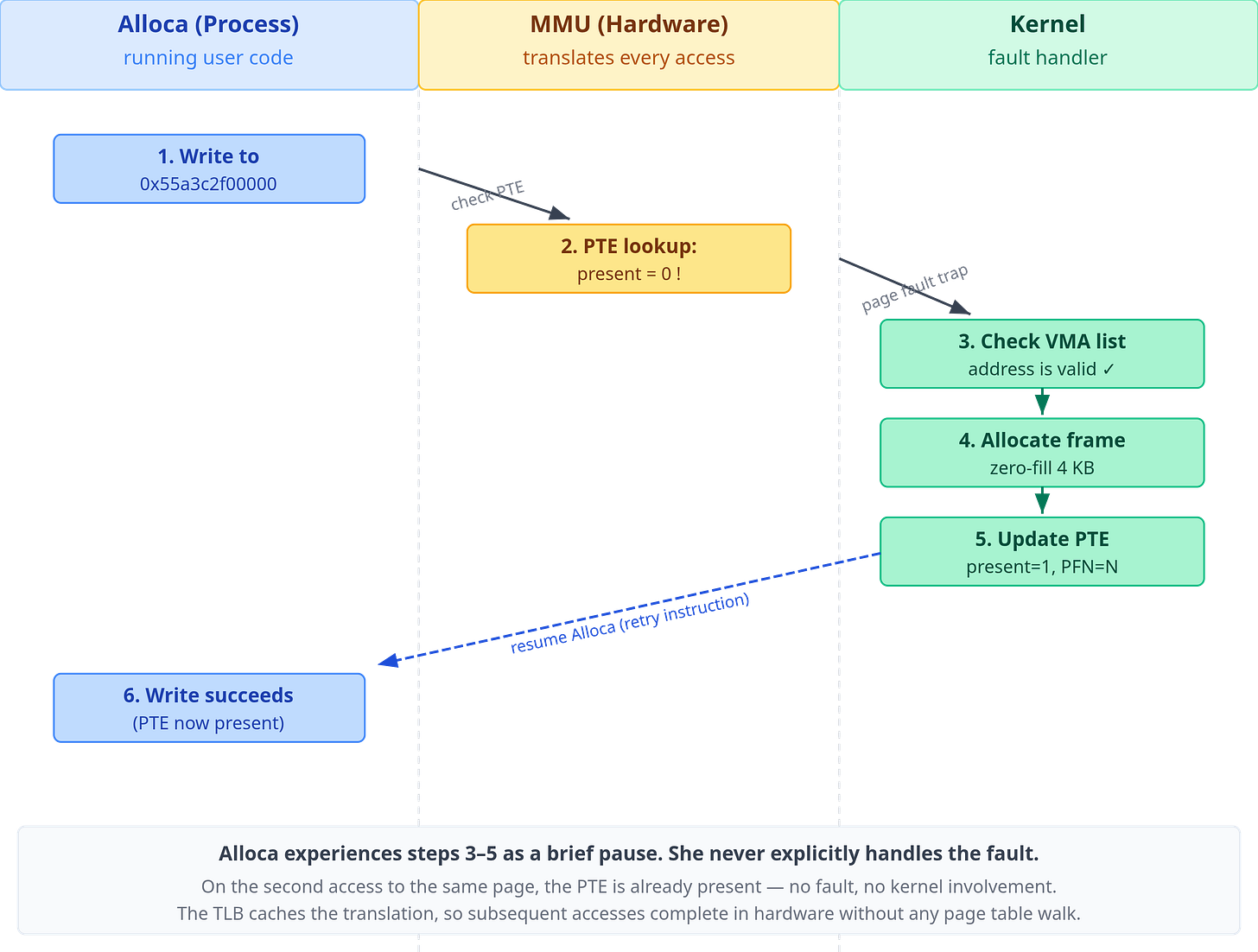

She does what any process would do: she makes a system call asking for memory. A new region appears in her address space. Kernel hands her an address: 0x55a3c2f00000. She immediately goes to write her first value there.

And then something strange happens. Time seems to stop for a fraction of a moment. And then it starts again, as if nothing had occurred. Her write went through. But something had happened, she had simply not noticed.

Alloca: “That was odd. Did I just… stutter?”

Kernel: “You did. You triggered a page fault. Don’t worry, I took care of it.”

Alloca: “A page fault? What’s that? And what did you take care of?”

Kernel: “When I gave you that address, I didn’t actually back it with physical memory. I recorded the promise that this range of virtual addresses belongs to you, but I didn’t go and find a physical frame to put behind it.”

Alloca: “You gave me an address without any memory behind it? That sounds like fraud.”

Kernel: “It’s efficiency. Think about it: you might ask for a hundred megabytes and only use ten. If I allocated a physical frame for every page you asked for, I’d be wasting most of physical memory on pages that never get touched. So instead, I wait. When you actually try to access a page for the first time, the MMU looks up that address in your page table and finds the present bit set to zero. No physical frame is mapped. The MMU raises a trap (a page fault) and control transfers to me.”

Alloca: “But how did you know my access was valid? Maybe I was accessing some address I had no right to. How do you tell the difference?”

Kernel: “When I gave you that memory region, I recorded a note called a virtual memory area, or VMA. It says: ‘virtual addresses from X to Y are promised to Alloca, with these permissions.’ The VMA is not a page table entry. It’s a higher-level record of intent that I maintain separately.”

Alloca: “So you have two different data structures tracking my memory?”

Kernel: “Yes. The VMA describes what address ranges are valid for you to access. The page table describes which of those valid pages are currently backed by physical frames. When you were created, I set up VMAs for your code segment, your data segment, your stack. Each one records an address range and what you’re allowed to do there: read, write, execute. Later, when you call

mallocormmap, I create a new VMA for that allocation. But I don’t immediately create page table entries for it.”Alloca: “So when the MMU finds a missing page table entry for an address, it triggers a page fault?”

Kernel: “Yes. When a page fault fires, I have to handle it. I first check whether the faulting address falls inside a valid VMA. If yes, the access is legitimate. I just haven’t backed it with a physical frame yet. If the address is outside any VMA, you’ve wandered somewhere you were never given. That’s a segmentation fault, and I terminate you.”

Alloca: “So the VMA list is your record of promises, and the page table is the record of fulfilments.”

Kernel: “Well put. Now, once I confirm the fault is legitimate, I find a pre-zeroed physical frame, write a new entry into your page table pointing to that frame, and resume your execution. The CPU retries the faulting instruction and your write goes through.”

Alloca: “Wait. Why did you zero it out? Couldn’t you just give me the frame as-is?”

Kernel: “Absolutely not. Physical frames get reused. That frame might have previously held data from another process. If I handed you that frame without clearing it first, you could read another process’s secrets just by reading uninitialized memory. The zero-fill guarantee is a security invariant: you will never see data you didn’t write yourself.”

Alloca: “That’s reassuring. But what if there are no free frames? What if physical memory is full?”

Kernel: “It happens more often than you’d expect, and dealing with it changes what the present bit in a PTE can mean.”

Aside 1: How the stack grows using demand paging

Remember, when talking about address space layout, we said the stack grows downward. That growth is demand-driven too. The kernel marks the stack VMA as growable, but it does not map every possible stack page upfront. When the stack pointer moves into the next valid page below the current stack, the access faults. Because the faulting address is just below the current stack bottom and the stack VMA is marked as growable, the kernel extends the VMA downward by one page, allocates a frame, and resumes execution. From Alloca’s perspective the stack just grew silently.

Two mechanisms prevent this from continuing forever. First, the kernel enforces a maximum stack size (on Linux, set by ulimit -s, defaulting to 8 MB). The stack VMA will not be extended past that limit. Second, below the maximum stack limit sits a guard page: a single page that is deliberately left unmapped, no VMA covers it. If the stack pointer jumps far enough to land in or past the guard page (due to deep recursion, a large stack-allocated array, or a corrupted stack pointer), the fault finds no covering VMA. The kernel treats that as an invalid access and delivers SIGSEGV.

The guard page is what turns a silent runaway stack into a detectable crash. Without it, the stack could silently overflow into the memory-mapped region below it and corrupt library or heap data before anything notices.

Aside 2: Memory overcommit: a consequence of demand paging

Demand paging creates an interesting situation: if the kernel only allocates physical frames at first-access time, then malloc(10GB) on a machine with 4 GB of RAM will succeed (at least initially). The kernel records the promise in a VMA and returns immediately. No frames are allocated. This is called overcommitting memory: the total size of all VMAs across all running processes can far exceed the amount of physical RAM plus swap.

The kernel’s bet is statistical. In practice, most allocated memory is never fully touched. A process might allocate a large buffer “just in case” and only ever write to a fraction of it. A JVM might reserve a large heap up front but populate it lazily. Across hundreds of processes, the working sets sum to much less than the total committed virtual memory, and the system runs fine.

The bet occasionally goes wrong. When too many processes start faulting in pages simultaneously, memory pressure spikes, and the kernel runs out of physical frames. At this point it invokes the OOM killer (Out-Of-Memory killer): a kernel subsystem that scores each process by its memory consumption, age, and other heuristics, then kills the highest-scoring one to reclaim its frames.

You can observe overcommit and OOM events on Linux:

# How much virtual memory is committed system-wide (in kB)

grep CommitLimit /proc/meminfo # kernel’s ceiling: overcommit_ratio × RAM + swap

grep Committed_AS /proc/meminfo # total virtual memory promised to all processes

# See if the OOM killer has fired recently

dmesg | grep -i “oom\|killed process”

journalctl -k | grep -i oom

The kernel’s overcommit policy is tunable via /proc/sys/vm/overcommit_memory:

0(default) uses heuristics1always allows any allocationand

2caps total committed memory atovercommit_ratio × RAM + swapand begins refusingmalloccalls that would exceed it.

Key Takeaway

When a process allocates memory, whether by calling malloc, growing its stack, or explicitly requesting memory via mmap, the kernel does not immediately back every page of that allocation with a physical frame. Instead, it creates a Virtual Memory Area (VMA) in the process’s memory descriptor: a record that says “this range of virtual addresses is valid and belongs to this process, with these permissions.” The page table entries for these pages are left absent (present bit = 0).

The VMA and the page table serve different roles:

The VMA is the kernel’s record of intent: what address ranges the process is allowed to access.

The page table is the record of reality: which virtual pages are currently backed by physical frames.

The first time the process reads or writes any address in an allocated-but-unmapped range, the MMU finds a page table entry with present=0 and raises a page fault, a CPU exception that transfers control to the kernel. The kernel’s page fault handler:

Looks up which VMA contains the faulting address. If none, the access is invalid and the kernel delivers a segmentation fault, terminating the process. Otherwise, it continues:

Allocates a free physical frame.

Zero-fills that frame (the zero-fill guarantee, required for security, ensures the process never sees data from a previous owner of that frame).

Installs a new page table entry pointing to that frame, with the present bit set.

Returns from the exception, causing the CPU to retry the faulting instruction.

From the process’s perspective, execution pauses for a few microseconds and then continues as if nothing happened. This mechanism is called demand paging: physical memory is allocated on demand, at the moment of first access, rather than speculatively at allocation time.

The fault described above requires no disk I/O: it is called a minor page fault. Minor faults cover any fault the kernel can resolve entirely in memory. This includes zero-fill for pages that aren’t backed by any file, but also cases where the data is already resident somewhere (in the page cache, or shared from another process) and just needs a PTE installed. There is a second kind of fault called major fault, that does require reading from disk. We will get to that next.

A side effect of demand paging is that physical frames are allocated one by one, on demand, from wherever free memory happens to be. There is no requirement that consecutive virtual pages land in consecutive physical frames. A process’s stack might occupy frames scattered across RAM, interleaved with frames belonging to completely different processes. The page table is what makes this invisible: it maps each virtual page independently, so the process always sees a clean, contiguous virtual address space regardless of where its frames physically reside.

Prefer reading this as a polished PDF? I’ve prepared a beautifully typeset PDF version for offline reading and reference. Buying it is another way to support the time that went into this article.

When Physical Memory Runs Out: Swap and the Dual Meaning of the Present Bit

Alloca: “So what happens when there is not enough free physical memory left to allocate?”

Kernel: “Let me show you. Let’s say that I need to allocate a frame for you, but they are all taken. So I must evict a page from somewhere, I look for a page that hasn’t been accessed recently. It could be from another process, or even one of your own pages. Once I find the page to evict, I write its contents to disk to a reserved area called swap space. Then I reclaim the frame and give it to you.”

Alloca: “And what happens if the process that owned that page tries to access it again?”

Kernel: “Before I give that frame to you, I update the process’s page table. I locate the PTE that points to that frame, clear its present bit to 0, and store the swap location in the remaining bits of the entry. The hardware never looks at those bits when present is 0, but I do when handling the page fault.”

Alloca: “So when that process touches the page again…”

Kernel: “The MMU sees the present bit is zero in the PTE, and it raises a page fault bringing me into action to handle it. My fault handler follows the same entry point as always: check the VMA first. In this case, because the page was swapped, its VMA must exist, so the fault handler moves forward and checks the PTE next. It finds the swap coordinates in the non-present bits, uses those to read the data from the disk, and loads it into a fresh frame. After that, it reinstalls the PTE with present=1. Once the page fault handler finishes, I resume the process and it retries the instruction that triggered the fault and this time it succeeds. It never knew the page had left.”

Aside: Minor vs Major Page Fault

Earlier in the demand paging section, we talked about minor page faults. Those kind of page faults don’t involve disk I/O and are handled directly in memory. For example, when malloc allocates more pages, the kernel simply creates the VMA, and allocates the physical frames on demand when the page fault occurs.

The page fault that we discussed above when a process tries to access a page that has been swapped to disk is a major page fault because handling it requires disk I/O.

Alloca: “So present=0 in a PTE always means that the data is in the swap?”

Kernel: “No. Swap is one destination, but it’s not the only one. A non-present PTE can point to data that lives somewhere other than swap space.”

Alloca: “Where else can it go besides swap?”

Kernel: “A file. Not every page comes from memory you allocated with

mallocor grew from the stack. Some pages map directly to content stored in a file on disk.”Alloca: “How does that work?”

Kernel: “You use the

mmapsystem call. It lets you map a file into your address space. When you do that, I create VMAs for the mapped range, but I leave the PTEs absent, just like withmalloc.”Alloca: “So on first access?”

Kernel: “This time again the MMU sees an absent PTE and raises a page fault. But handling this page fault is different from how I handle a page fault for a swapped page, or how I handle allocation of new memory like what we discussed when talking about demand paging earlier.”

Alloca: “What changes?”

Kernel: “The first step is the same, I check the VMA to confirm this is a valid region. But what happens next depends on the type of mapping.”

Alloca: “What’s different about a file-backed mapping?”

Kernel: “For anonymous mappings, a fault means either a fresh allocation where I hand you a new zero-filled frame, or a swap restore, where I read the page back from disk using the swap coordinates stored in the PTE. For file-backed mappings, there is no swap entry. Instead, the VMA itself tells me which file and which block of that file to read. I load that block into a frame, install it in the page table, and resume you.”

Alloca: “So at the PTE level, present=0 is just a signal: data is not in RAM. But the place to find it depends on what kind of mapping this is?”

Kernel: “Precisely. For anonymous memory pages that have been swapped, the non-present PTE can carry swap coordinates. For a file mapping that has not been loaded yet, I usually use the VMA to find the file and offset. Either way, the fault handler has enough information to reconstruct the page.”

Key Takeaway

When physical memory runs out, the kernel must reclaim frames. It selects pages that have not been accessed recently and evicts them. For anonymous pages (heap, stack, malloc), there is no file to fall back on, so the kernel writes the page’s contents to swap space on disk before freeing the frame. It then updates the PTE: the present bit is cleared to 0, and the remaining bits are repurposed to store swap coordinates (device number and page offset). These bits are ignored by the hardware; they exist solely as a private record for the kernel’s own fault handler.

When the evicted page is next accessed, the MMU finds present=0 and raises a major page fault. The fault handler reads the swap coordinates from the PTE, loads the page from disk into a fresh frame, reinstalls the PTE with present=1, and resumes the process.

However, a page fault for a file-backed mapping is handled slightly differently. Here, the VMA contains information about the file and the offset in the file needed to populate the frame.

Together, anonymous and file-backed mappings cover all the cases a fault handler encounters. Two questions decide which path it takes:

What type of mapping is this? Anonymous memory has no file behind it. File-backed memory does.

Why is the page absent? A first-access fault (i.e., the frame was never allocated), or the page was evicted due to memory pressure and now being accessed again.

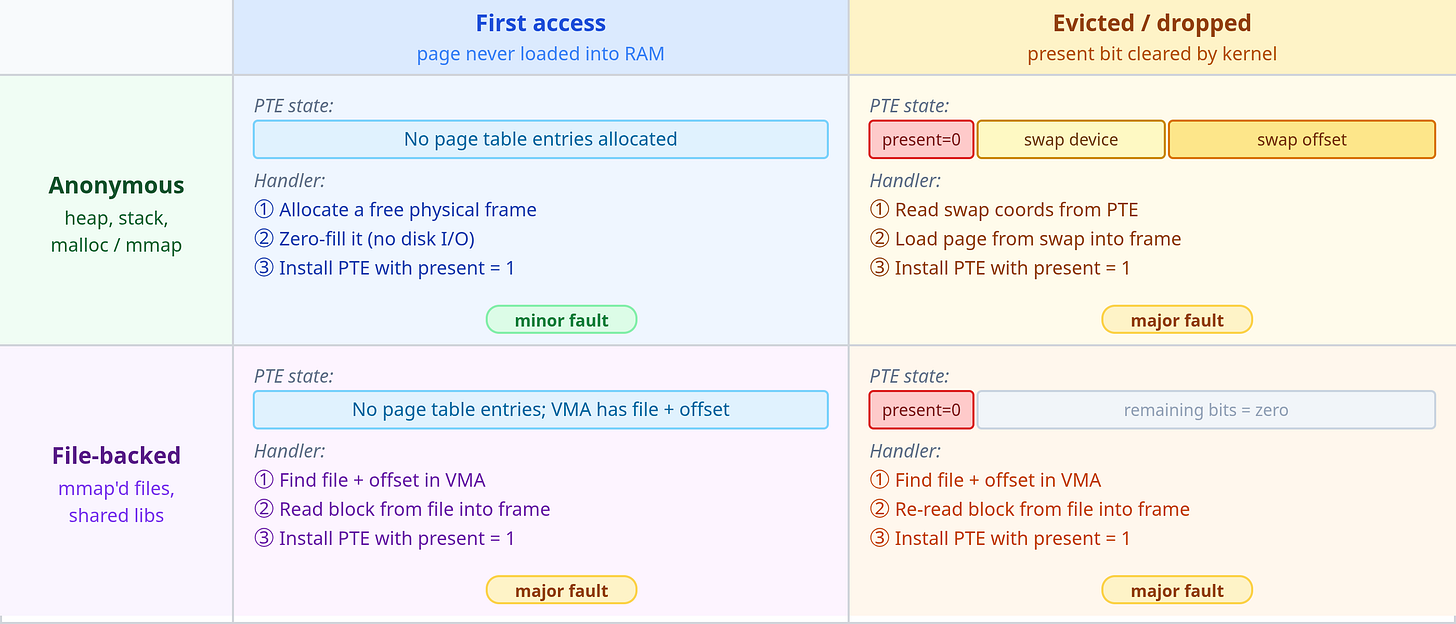

Figure 7 below shows all four combinations and how the fault handler resolves each

Aside: Pinned memory and GPU data transfers

Everything discussed so far assumes the kernel is free to evict any page when memory pressure demands it. There are cases where that is unacceptable. Pinned memory (also called page-locked memory) is memory that the kernel is prohibited from swapping out. A process can pin a region by calling mlock(), after which the kernel guarantees that the underlying physical frames will not be moved or reclaimed for as long as the lock is held.

The most common reason to pin memory today is GPU data transfers. DMA (Direct Memory Access) engines, which move data between host RAM and GPU memory without CPU involvement, require that the source or destination buffer remain at a fixed physical address for the duration of the transfer. If the kernel were to evict a page mid-transfer and reassign the frame, the DMA engine would read or write the wrong physical location. Pinning prevents this by fixing the physical address in place.

This is why AI training frameworks pin host memory for input batches. In PyTorch, torch.cuda.pin_memory() and the pin_memory=True option on DataLoader call mlock() under the hood. With pinned buffers, the CUDA driver can initiate DMA transfers directly from host RAM to GPU memory without an intermediate copy, and it can overlap those transfers with GPU computation. On large models trained on high-bandwidth interconnects (NVLink, PCIe 5.0), this overlap between data loading and compute is a significant contributor to overall throughput.

The trade-off is that pinned memory is a scarce resource. Because pinned pages cannot be reclaimed, overusing it reduces the memory available for the page cache and other processes, increasing the risk of swap pressure elsewhere.

Copy-on-Write and Fork

Alloca has been given a large job: process a large dataset. She needs help to do this in a quick amount of time.

Alloca: “I wish I had a copy of me that could share this workload.”

Kernel: “You can do that, just use the

fork()system call.”Alloca: “How does that work?”

Kernel: “When you call

fork(), I make a new process which is almost an identical copy of you. I give this process the same code as you, a copy your file descriptor table and even your memory.”

Alloca calls fork() and creates a new process called “Forka”. She inherits everything Alloca had.

Forka and Alloca start to do their work. Soon Alloca tries to perform a memory write. The familiar brief pause. Then it passes.

Alloca: “That pause. What was that?”

Kernel (appearing): “Another page fault.”

Alloca: “Another page fault? But the page is present, I’ve been reading from it just fine.”

Kernel: “It’s present, yes. But I marked it read-only, and you tried to write. That’s what triggered the fault.”

Alloca: “Wait, why did you mark it read-only? That memory was clearly meant for both reading and writing.”

Kernel: “It was an optimization I did when creating Forka. Let me explain why I did it.”

Alloca: “Please.”

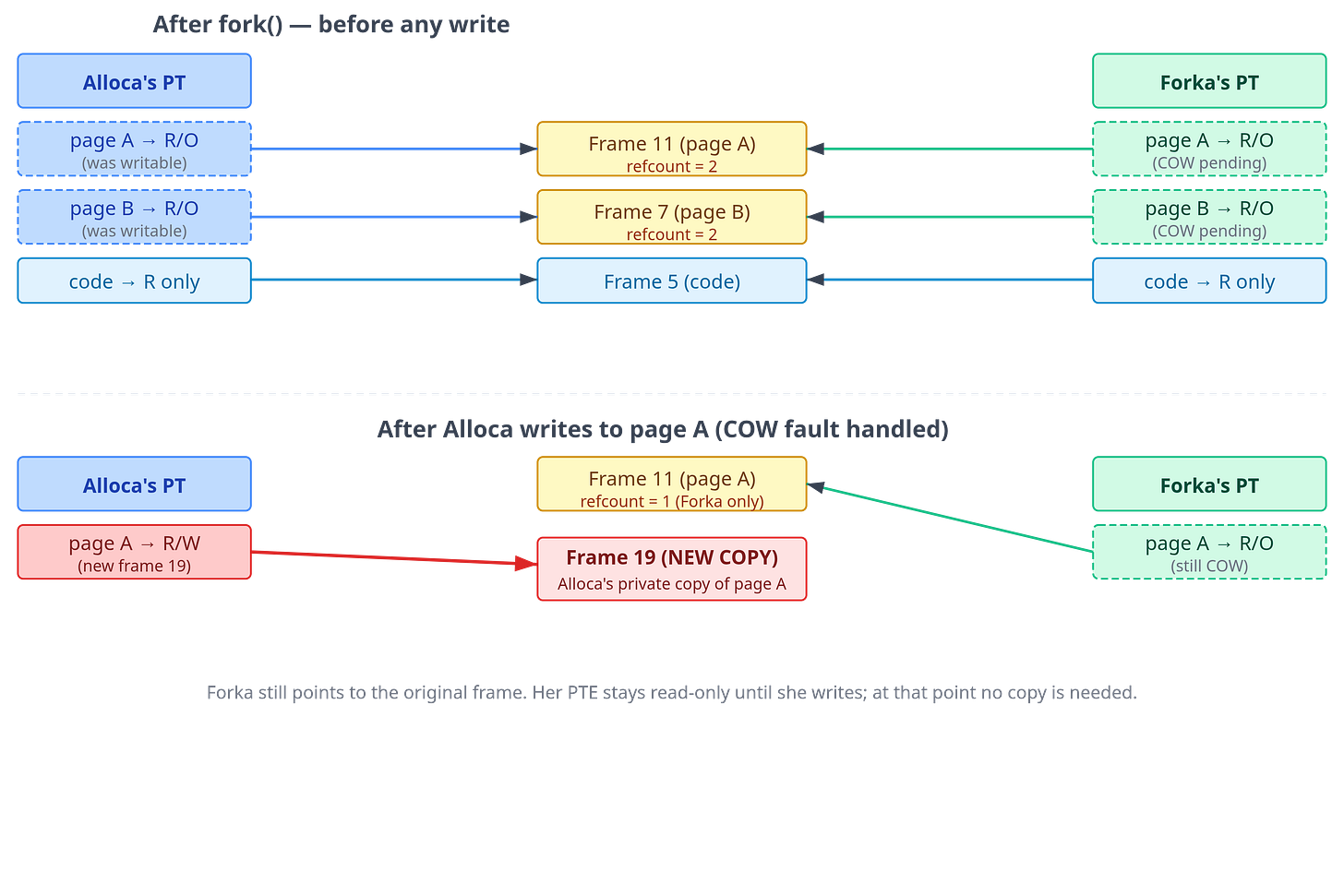

Kernel: “I created Forka by giving her an independent copy of your memory. The simple approach is to copy every page immediately. But you have gigabytes of heap, and most of it she may never write to. Copying all of it upfront would waste a lot of time, and also make fork extremely slow. So instead, I gave Forka new page tables that initially point at the same physical frames as you. Which means that both of you are sharing the same frames. But this only works as long as both of you are just reading those frames. When either of you need to write to one of these shared pages, page fault occurs and I give the writing process a private copy of that frame. This particular optimization is also called copy-on-write (CoW).”

Alloca: “So the read-only marking is how you detect that moment.”

Kernel: “Precisely. Your write triggered a fault, I caught it, confirmed this was a copy-on-write page, and handled it: I allocated a fresh frame, copied the 4 KB into it, updated your PTE to point to the new frame with write permission restored, and resumed your write. Forka’s mapping is untouched.”

Alloca: “And now we each have our own copy of that page?”

Kernel: “Yes. That page has been copied on write. But only that page. All the pages you haven’t written to yet are still shared. If you never write to a page, it stays shared forever, zero copies made.”

Forka: “What if my parent exits before I write to a page?”

Kernel: “I take care of that by tracking reference and mapping state for each physical frame. When your parent exits, I remove its mappings. The next time you write to a page, if I can see that the page is no longer shared, I can skip the copy and simply restore write permission on your existing PTE. There’s no one left to protect.”

fork(). Initially, both page tables point to the same physical frames (top). After Alloca writes to page A, the kernel allocates a new frame (19), copies the contents, and updates only Alloca’s PTE to point to the new frame. Forka’s PTE still points to the original frame and remains read-only; the kernel will restore write permission on Forka’s next write fault without needing to copy, because the frame is no longer shared.Aside: fork + exec: why process creation is cheap

A common Unix pattern is to call fork immediately followed by exec to load and execute a new program. exec discards the child’s entire address space and builds a fresh one for the new program. For example, this is how the shell works whenever you execute a command.

For this reason fork needs to be cheap and one way to achieve that is by avoiding the copying of parent’s memory pages until it is really needed.

Key Takeaway

fork() creates a new process (the child) that is an exact copy of the parent at the moment of the call. Naively, this would require copying every byte of the parent’s virtual memory, a multi-gigabyte operation for large processes. Copy-on-write (COW) makes fork() efficient by deferring that copy until it is actually necessary.

When fork() is called:

The kernel allocates a new process descriptor for the child.

The kernel creates a new set of page tables for the child, initially pointing to the same physical frames as the parent.

For every private writable mapping, the kernel marks the entry as read-only in both parent and child. Read-only pages (code) are shared as-is, they were already protected.

The kernel tracks reference and mapping state for each physical frame. After a fork, private pages that were writable in the parent are now mapped by both processes, so their state records that they are shared.

When either process subsequently writes to a COW-protected page, the MMU detects a write to a read-only PTE and raises a protection fault. The kernel’s COW handler:

Checks whether the page is still shared. If it is, a copy is needed. If the kernel can determine the faulting process is now the only relevant owner, it can simply restore write permission without copying.

If a copy is needed: allocates a new frame, copies the contents, updates the faulting process’s PTE to point to the new frame with write permission. The other process’s PTE is left pointing to the original frame, still read-only.

Memory-Mapped Files

Several cycles pass. Alloca is trying to analyze a large log file. She has been doing it the obvious way, calling read() in a loop, filling a buffer, processing the buffer, repeat. Kernel notices this and wanders over.

Kernel: “You know there’s a better way to do that.”

Alloca: “I’m reading a file. What better way is there?”

Kernel: “Instead of reading into a buffer, let me map the file directly into your address space. You access it like regular memory: just use a pointer, and I’ll handle getting the data to you.”

Alloca: “You mean I can read a file with a pointer? No

read()calls at all?”Kernel: “Exactly. Call

mmap(). Give me the file descriptor, the length, and some flags. I’ll create a new VMA in your address space (a memory-mapped region). Then you can read from or write to addresses in that region just like regular memory, and I’ll give you the file’s contents.”Alloca does it. She gets back an address,

0x7f4b00000000. She reaches out to read the first byte at that address.And the pause happens again. A little longer this time.

Alloca: “Longer pause. What was that?”

Kernel: “A major page fault. When you called

mmap(), I didn’t actually load any of the file data into memory. That file could be gigabytes in size, and I have no idea which parts you’ll actually access. So I just created a VMA for that address range and left the page table entries absent. The first time you accessed that page, the MMU found present=0, trapped to me, and I had to read it from disk.”Alloca: “So mmap is also lazy?”

Kernel: “That’s right. Demand paging works for files too. Now, notice where I put the data after reading it from the disk.”

Alloca: “Where?”

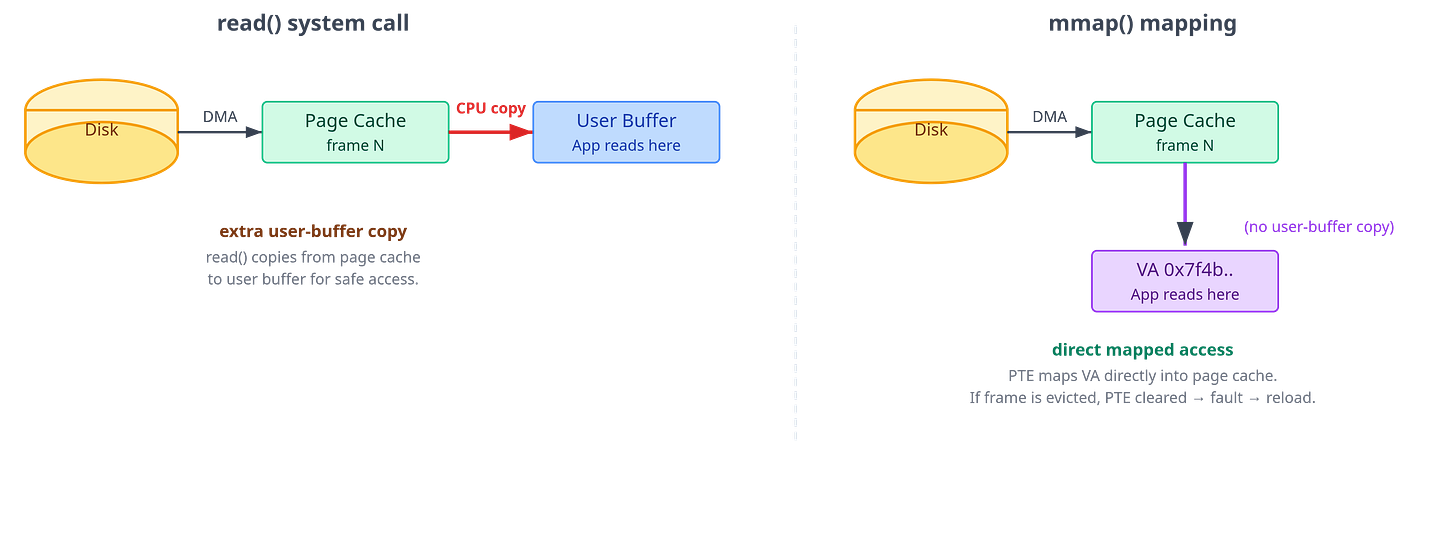

Kernel: “In the page cache. This is a pool of physical frames I use to cache file data. When a file page is read (whether via

read()ormmap()), it lands in the page cache. For your mmap access, once the data was in the page cache, I installed a page table entry pointing directly to that page cache frame. Your virtual address now directly maps to the physical frame that holds the file data.”

Aside: The page cache is not reserved memory

A common misconception is that the page cache is a reserved pool of memory, it’s not. It is simply the set of physical frames that the kernel is currently using to hold file data. When an application needs more memory and there are no free frames, the kernel can reclaim clean page-cache frames instantly, because the file on disk is already the backing copy. This is why a system that looks nearly full of “used” memory can still allocate freely: much of that “used” memory is reclaimable cache, not locked-in application data.

Alloca: “So I’m reading the file’s data directly from the page cache, through my page table?”

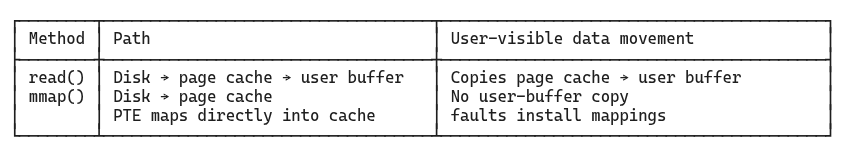

Kernel: “Yes. No intermediate user-space buffer copy. Now compare that to what happens when you use

read()instead. I still bring the file data into the page cache, usually by DMA from the storage device into memory. But thenread()copies the data from the page cache frame into your user-space buffer. That page-cache-to-user-buffer copy is the extra step thatmmap()avoids.”

Aside: What is DMA (Direct Memory Access)?

Normally, when a CPU wants data from a storage device or network card, it would have to sit in a loop reading bytes, which is an expensive waste of cycles. DMA is a hardware mechanism that lets peripheral devices transfer data directly into main memory (RAM) without CPU involvement.

In this scheme, the kernel and device driver submit an I/O request that describes the target memory pages and the storage range. The storage controller uses DMA to transfer data directly into those pages and interrupts the CPU when the transfer is done. The CPU is free to do other work the entire time.

Alloca: “And

mmap()avoids that second copy because I access the data directly through the mapped address. But what happens if you evict the page cache frame while it’s mapped?”Kernel: “Before I can reclaim that frame, I first remove the page table entry pointing to it. The VMA remains intact, so the next time you access that address the MMU finds no mapping, faults, and I reload the data. From your perspective the mapping is seamless; you never hold a dangling pointer.”

read() vs. mmap() I/O paths. With read(), data is brought from disk into the page cache and then copied into the process’s user-space buffer. With mmap(), the process’s PTE points directly into the page cache, eliminating that page-cache-to-user-buffer copy. The trade-off is that mmap() pays through page faults and page-table management instead of explicit read calls.Alloca: “So should I always use

mmap()for file I/O? Avoiding that user-buffer copy sounds like an obvious win.”Kernel: “Not always.

mmap()removes one cost, but it introduces others. It trades explicit I/O and copying for page faults, page tables, TLB pressure, and different failure modes. Whether that trade is good depends on the access pattern.”

Aside: mmap() is not automatically faster

The first access to a cold mapped page is still a page fault. The fault enters the kernel, locates the VMA, finds or reads the page cache page, installs a PTE, and resumes the faulting instruction. If you scan a huge file once, you may take one fault per 4 KB page, and those faults can dominate the page-cache-to-user-buffer copy you avoided.

read() and mmap() also expose different shapes of work. With read(), user space usually asks for a large buffer at a time, maybe 64 KB, 256 KB, or more. The kernel copies a contiguous chunk into that buffer and can issue readahead based on the file access pattern. With mmap(), readahead can happen too: when a fault reveals sequential access, the kernel may read surrounding file pages into the page cache, and may map nearby already-cached pages around the fault. But the control flow is still implicit and fault-driven. Cold pages still need faults to install mappings.

Mappings also consume page table memory, create TLB pressure, and may trigger TLB shootdowns when unmapped or when permissions change. Error handling is different too: if another process truncates a mapped file and you later touch a page beyond the new end, the kernel may deliver SIGBUS. With read(), you usually see an error return or a short read instead.

So mmap() is often attractive when access is random, repeated, shared across processes, or naturally pointer-based. read() is often competitive or better for simple sequential streaming, especially with large buffers. “Zero-copy” is not the same as “free”; the only reliable answer for performance-sensitive code is to measure the actual workload.

At that moment, Forka wanders over. She too needs to read the same log file.

Forka: “I’m going to mmap that same file. Same one you’re using, Alloca.”

Forka calls mmap(). She accesses the same page Alloca just read. But this time there is no pause.

Forka: “That was fast. Why no pause this time?”

Kernel: “Because that page is already in the page cache, it was loaded when Alloca accessed it. I just gave your page table an entry pointing to the same physical frame. You’re both reading from the same physical bytes. No disk I/O. No copy. Nothing moved.”

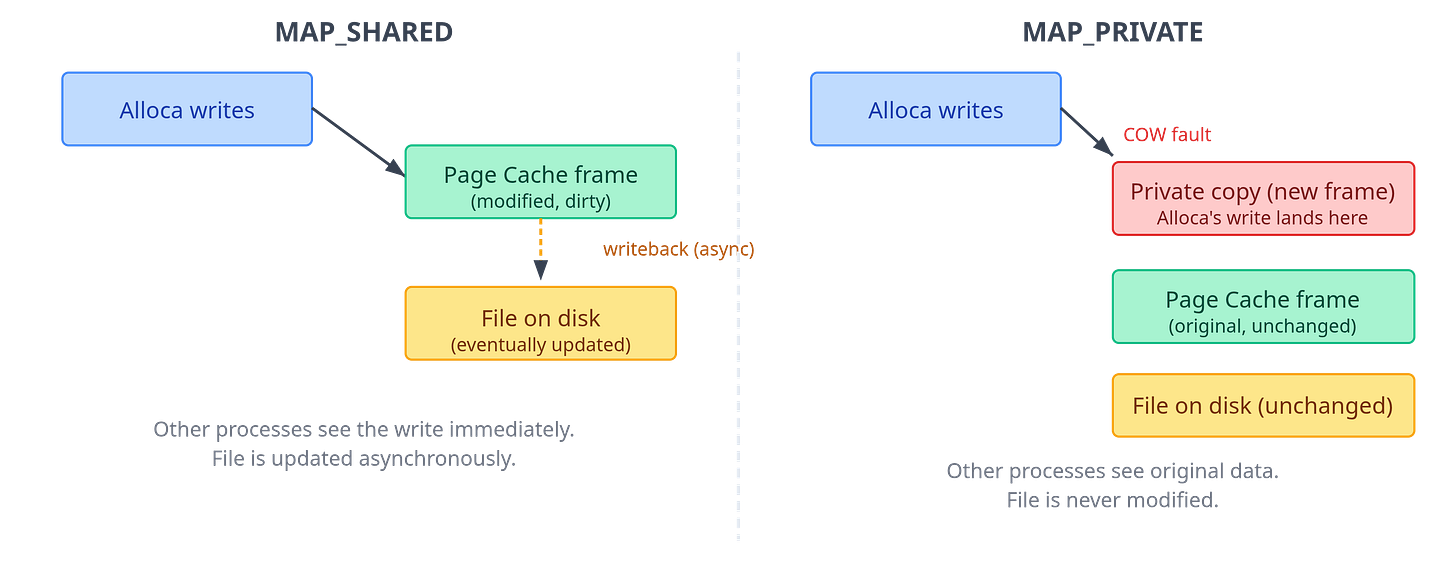

Alloca: “Wait, we’re both pointing at the same physical frame? So if I write to my mapped region, does Forka see it?”

Kernel: “That depends on a flag you passed to

mmap(). WithMAP_SHARED, your write goes directly into the shared page cache frame, so yes, Forka sees it. WithMAP_PRIVATE, your write triggers a COW fault and you get a private copy, same as afterfork(). The file is never touched.”Alloca: “And if I use

MAP_SHARED, when does the change actually reach disk?”Kernel: “It happens asynchronously. But, if you need to guarantee it has been written to disk, you call msync() or fsync().”

MAP_SHARED vs. MAP_PRIVATE write semantics. With MAP_SHARED, writes go into the shared page cache and are flushed to disk asynchronously. With MAP_PRIVATE, the first write triggers a COW fault; the process gets a private copy that diverges from both the file and other processes.Key Takeaway

mmap() is a system call that can be used to map a range of bytes from a file directly into a process’s virtual address space, creating a new VMA backed by the file. Subsequent reads and writes to that virtual address range behave exactly like memory accesses: the kernel’s page fault machinery handles loading data from disk on demand.

The central abstraction is the page cache: a kernel-managed pool of physical frames that holds recently accessed file pages. In the normal buffered-I/O path, file access via read(), write(), and mmap() goes through the page cache. The difference is how user space reaches those bytes: